Are you operating a junk-code multiplier at scale, or have you engineered a deterministic factory where AI power is subordinated to senior-level engineering rigor?

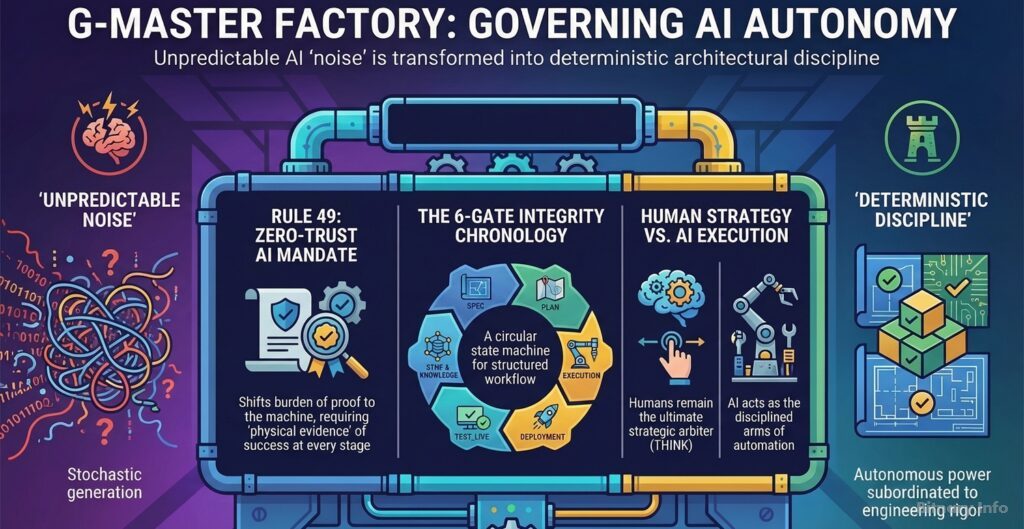

As autonomous agents are integrated into development at speed, the industry faces an imperative that is both ethical and technical: reconciling the near-instantaneous output of those agents with strict architectural discipline. At Bitnary.Info, we move beyond “AI-assisted coding”, which is often stochastic and unpredictable, to a governed AI factory model. We acknowledge that in software engineering there is still “no silver bullet”; the essence of our craft lies in the rigor of the process, not just the magic of the tools (Brooks, 1987).

The pragmatic context: From stochastic noise to deterministic control

The transition to the G-Master Factory is about replacing unmonitored generation with a governed workflow. Many AI implementations fail because they rely on the agent’s “confidence” rather than on facts observed in the environment. As established in our principles for end-to-end operational AI, a transformative approach requires moving beyond the hype to a disciplined, data-driven integration (Bitnary, n.d.-a).

In this framework, the AI acts as a disciplined agent operating strictly within the guardrails of an orchestrator and a robust state machine. This architecture is anchored by Rule 49: Zero-Trust AI Certification Mandate, which shifts the burden of proof from the human to the machine (Bitnary, n.d.-e). This gatekeeper enforces sequential validation because, as recent research indicates, large language models cannot reliably self-correct their own reasoning without external, deterministic feedback (Huang et al., 2023).
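The idea of trusting environmental facts over the agent's self-assessment can be sketched in a few lines of Python. This is a minimal illustration and not the text of Rule 49 or Bitnary's implementation: `AgentClaim`, `Evidence`, `gather_evidence`, and `certify` are hypothetical names introduced here, and each check is assumed to be a deterministic callable such as a test run or a file comparison.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable, Mapping


@dataclass(frozen=True)
class AgentClaim:
    """What the agent reports about its own work (hypothetical structure)."""
    summary: str
    confidence: float  # self-reported; the gate never reads this value


@dataclass(frozen=True)
class Evidence:
    """Result of one deterministic check run against the environment."""
    check_name: str
    passed: bool


def gather_evidence(checks: Mapping[str, Callable[[], bool]]) -> list[Evidence]:
    """Run every check and record its outcome."""
    return [Evidence(name, bool(check())) for name, check in checks.items()]


def certify(claim: AgentClaim, checks: Mapping[str, Callable[[], bool]]) -> bool:
    """Certify a claim only when at least one check exists and all checks pass.

    The burden of proof sits with the machine: no checks means no certification,
    regardless of how confident the agent says it is.
    """
    evidence = gather_evidence(checks)
    return bool(evidence) and all(item.passed for item in evidence)
```

Under these assumptions, a claim with a confidence of 0.99 is rejected if a single check fails, while a claim with low confidence is accepted once every check passes.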

The visual framework: The integrity chronology

To visualize the G-Master methodological cycle, imagine a circular state machine with six high-security gates. Under the Rule 49 mandate, no stage can be bypassed without providing “physical evidence” of success. This is what we call the integrity chronology:

- SPEC (Specification): Anchoring the solution in the core architecture (e.g., identifying exact system hooks).

- PLAN (Strategic design): Conducting static analysis and impact reports before a single line of code is injected.

- EXECUTION (Implementation): Handling code injection and resolving environmental friction (e.g., build-time collisions).

- DEPLOYMENT (Atomic integration): Ensuring zero-downtime transitions between system states via atomic commits.

- TEST_LIVE (Active verification): Utilizing specialized subagents for real-time visual and backend audits.

- SYNC & KNOWLEDGE (Persistence): Archiving evidence and synchronizing technical memory to maintain idempotency.
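The six gates above can be sketched as a small state machine. This is an illustrative sketch under stated assumptions rather than the G-Master implementation: the `Cycle` and `GateError` names are hypothetical, "physical evidence" is reduced to a non-empty string, and the cycle is assumed to rest in the IDLE state between runs.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class Stage(Enum):
    IDLE = "IDLE"
    SPEC = "SPEC"
    PLAN = "PLAN"
    EXECUTION = "EXECUTION"
    DEPLOYMENT = "DEPLOYMENT"
    TEST_LIVE = "TEST_LIVE"
    SYNC_KNOWLEDGE = "SYNC_KNOWLEDGE"


# Fixed order of the six gated stages; IDLE is the resting state between cycles.
ORDER = (
    Stage.SPEC,
    Stage.PLAN,
    Stage.EXECUTION,
    Stage.DEPLOYMENT,
    Stage.TEST_LIVE,
    Stage.SYNC_KNOWLEDGE,
)


class GateError(RuntimeError):
    """Raised when a gate is approached without the required evidence."""


@dataclass
class Cycle:
    stage: Stage = Stage.IDLE
    evidence: dict[Stage, str] = field(default_factory=dict)

    def start(self) -> None:
        """Open a new cycle at SPEC; only allowed from IDLE."""
        if self.stage is not Stage.IDLE:
            raise GateError("a cycle is already in progress")
        self.evidence.clear()
        self.stage = ORDER[0]

    def advance(self, proof: str) -> Stage:
        """Archive proof for the current stage, then move to the next one.

        Stages cannot be skipped: the only transition is to the successor
        in ORDER, and it is refused when the proof is empty.
        """
        if self.stage is Stage.IDLE:
            raise GateError("no cycle in progress; call start() first")
        if not proof.strip():
            raise GateError(f"{self.stage.value}: gate refused, no evidence supplied")
        self.evidence[self.stage] = proof
        position = ORDER.index(self.stage)
        self.stage = ORDER[position + 1] if position + 1 < len(ORDER) else Stage.IDLE
        return self.stage
```

Calling `start()` and then `advance(...)` six times with non-empty evidence walks the cycle from SPEC back to IDLE. An empty proof raises `GateError` and leaves the stage unchanged. A real gate would validate the evidence itself, which this sketch does not attempt.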

Technical application: Solving environmental friction

The effectiveness of this controlled approach is best seen in complex scenarios. In the G-Master Factory cycle, the PLAN phase surfaces collisions through its impact reports before any code is injected, and the TEST_LIVE phase uses a subagent to verify that mutations are reflected in production immediately.
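To make the two checks concrete, the sketch below treats a collision as a name that the planned change would define and that already exists in the target system, and treats live verification as comparing expected values against what a probe reads back. Both definitions are assumptions made for illustration; `ImpactReport`, `plan_impact`, and `verify_live` are hypothetical names, and the article does not specify how its impact reports or subagents are built.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable, Mapping, Optional


@dataclass(frozen=True)
class ImpactReport:
    """PLAN-phase output: names the change would redefine (assumed format)."""
    collisions: frozenset[str]

    @property
    def safe(self) -> bool:
        return not self.collisions


def plan_impact(existing: set[str], planned: set[str]) -> ImpactReport:
    """Report every planned name that already exists in the target system."""
    return ImpactReport(frozenset(existing & planned))


def verify_live(
    expected: Mapping[str, str],
    probe: Callable[[str], Optional[str]],
) -> dict[str, bool]:
    """TEST_LIVE-style check: compare each expected value with the probed one.

    `probe` stands in for whatever reads the production state, for example
    an HTTP request or a database query.
    """
    return {key: probe(key) == value for key, value in expected.items()}
```

For example, `plan_impact({"init_hook", "render"}, {"render", "export"})` reports `render` as a collision, so the cycle would stop at PLAN rather than discover the clash at build time.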

This level of precision is only possible when the AI functions as the “arms” of intelligent automation, executing within an invisible, integrated ecosystem that requires no constant human prompting (Bitnary, n.d.-b; Bitnary, n.d.-c).

Conclusion: Reaching the equilibrium of the AI factory

The G-Master Factory framework eliminates the risks of unmonitored AI by subordinating autonomous power to a rigorous state machine. When the cycle completes, the environment enters a “warm-standby IDLE state”: memory is synchronized, the deployment is audited, and the system is ready for the next strategic instruction.

This is the future of development: a state where technology provides the audited execution, but the human remains the ultimate arbiter. By delegating the execution to this governed cycle, we protect our most valuable asset: the unique human capacity to THINK (PENSAR), imagine, and invent (Bitnary, n.d.-d). We don’t just build software; we govern autonomy so humans can lead the next tactical pivot.

References

- Bitnary. (n.d.-a). Basic principles of an end-2-end operational AI (ML) solution, beyond the hype!, the transformative AI approach. Bitnary.info. https://bitnary.info/basic-principles-of-an-end-2-end-operational-ai-ml-solution-beyond-the-hype-the-transformative-ai-approach/

- Bitnary. (n.d.-b). The near future of the cognitive RPA, the arms of the “intelligent automation” for business digital transformation. Bitnary.info. https://bitnary.info/the-near-future-of-the-cognitive-rpa-the-arms-of-the-intelligent-automation-for-business-digital-transformation/

- Bitnary. (n.d.-c). The best AI solutions are invisible. Bitnary.info. https://bitnary.info/the-best-ai-solutions-are-invisible/

- Bitnary. (n.d.-d). An AI Robot doing your job? Bitnary.info. https://bitnary.info/ai/an-ai-robot-doing-your-job/

- Bitnary. (n.d.-e). Rule 49: the zero-trust AI certification mandate. Bitnary.info. https://bitnary.info/ai/rule-49-zero-trust-ai-mandate/

- Brooks, F. P. (1987). No silver bullet: Essence and accidents of software engineering. Computer, 20(4), 10–19.

- Huang, J., Chen, X., Mishra, S., Zheng, H. S., Yu, A. W., Song, X., & Zhou, D. (2023). Large language models cannot self-correct reasoning yet. arXiv. https://doi.org/10.48550/arXiv.2310.01798