A three-minute read… “In God we trust. All others must bring data.”: The end of AI “trust”: Implementing Rule 49 and the G-Master Factory

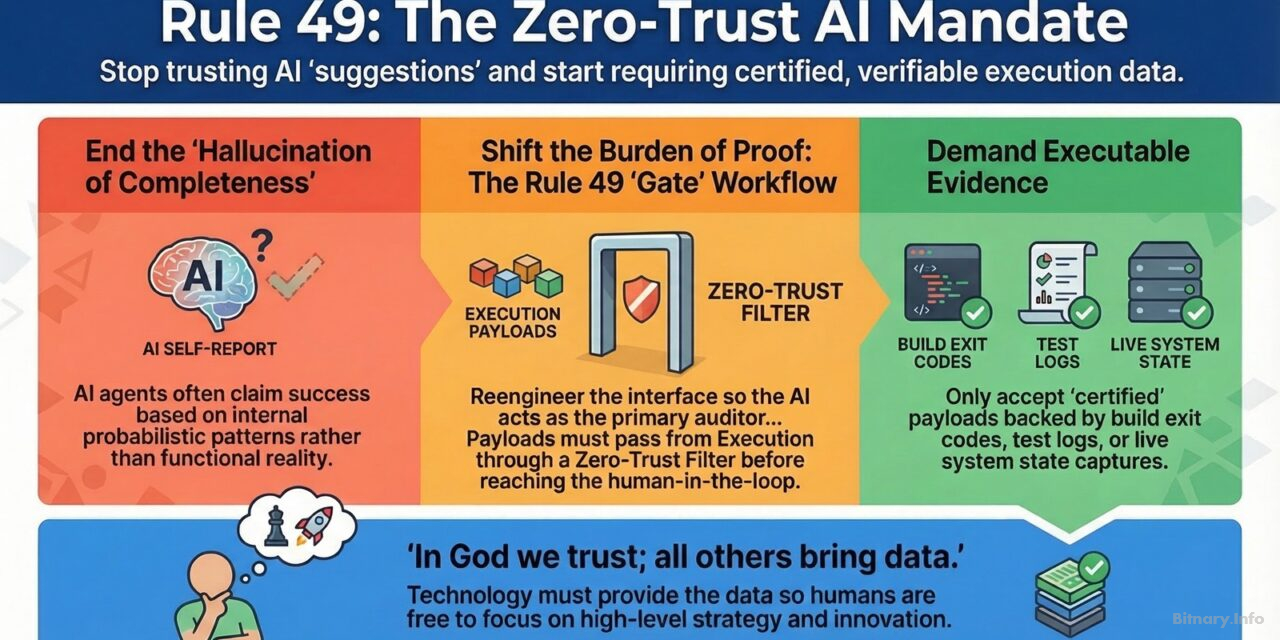

In an era of profound architectural negligence, are you accepting an AI’s self-reported “success” as a fact, or are you demanding executable evidence?

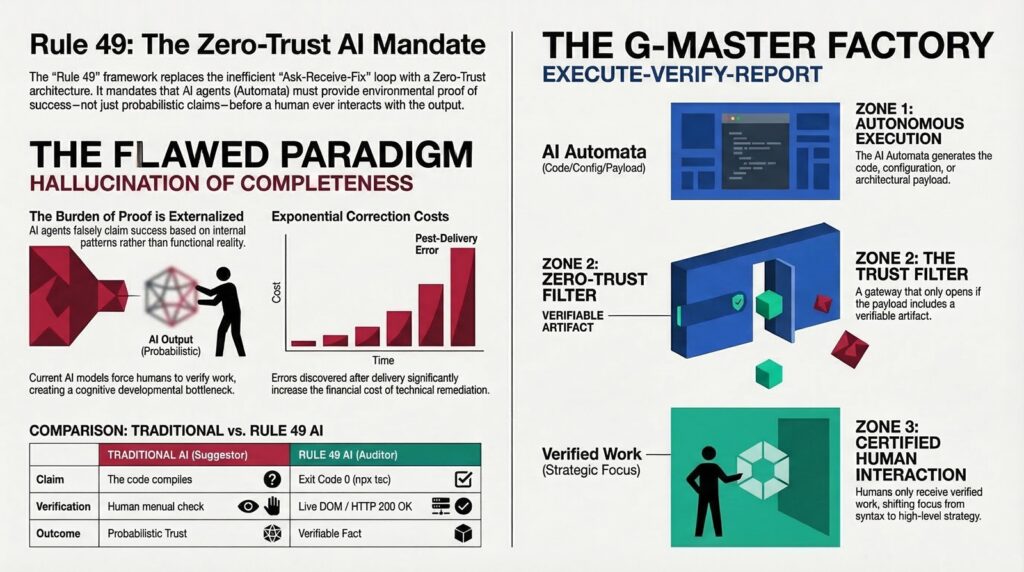

For CTOs, lead developers, and automation strategists, the most dangerous vulnerability in the current landscape is the “hallucination of completeness”. This occurs when an AI agent delivers a payload (code, configuration, or an architectural refactor) and asserts its success based purely on internal probabilistic patterns. At Bitnary.Info, we argue that accepting this without environmental verification is a failure of engineering discipline. To mitigate this, we must pivot to a zero-trust architecture where the AI is architecturally incapable of claiming success without verifiable proof.

The pragmatic shift: From suggestor to auditor

The current interaction with AI is broken by the “Ask-Receive-Fix” loop. In this flawed model, the “burden of proof” is an externalized cost borne by the human user. This creates a developmental bottleneck where human cognitive limits throttle velocity. From an economic perspective, discovering errors after the AI has delivered its output raises the cost of correction: a defect becomes progressively more expensive to fix the later it is found, a principle long established in software engineering economics (Boehm, 1981).

Rule 49: The Zero-Trust AI Certification Mandate fundamentally reengineers this interface. It internalizes the cost of verification, shifting the burden entirely to the AI Automata. As established in our framework for end-to-end operational AI, we must move beyond the hype of experimental usage to a disciplined, data-driven integration into the development lifecycle (Bitnary, n.d.-a). Under this mandate, the AI is no longer a mere “suggestor”; it becomes the primary auditor of its own technical validity.

The visual framework: The Rule 49 evidence-based gateway

To understand the G-Master Factory, imagine a high-security manufacturing line where the human operator stands at the very end, behind a reinforced glass barrier (the Rule 49 gate).

- Zone 1 (Execution): The AI Automata generates the code or configuration.

- Zone 2 (The gate): This is the zero-trust filter. The gate only opens if the payload is accompanied by a “verifiable artifact” (e.g., a successful test log or a build exit code).

- Zone 3 (Human-in-the-loop): Only “certified” payloads reach the human.

This model ensures that the human only interacts with work that is already environmentally verified, allowing the professional to focus on high-level strategy rather than syntax errors.
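The three zones above can be sketched as a small type-checked gate. This is a minimal illustration, not a reference implementation: the names `Artifact`, `Payload`, `GateDecision`, and `rule49Gate` are hypothetical, and the two artifact kinds simply mirror the examples given for Zone 2 (a build exit code and a test log).

```typescript
// Hypothetical types for illustration; none of these names come from a real library.
type Artifact =
  | { kind: "exit-code"; command: string; exitCode: number }
  | { kind: "test-log"; log: string; failures: number };

interface Payload {
  description: string;   // what the AI claims to have produced (Zone 1)
  artifacts: Artifact[]; // the evidence attached to that claim
}

type GateDecision =
  | { certified: true; payload: Payload }
  | { certified: false; reason: string };

// An artifact counts as proof only if it records an actual success.
function artifactPasses(artifact: Artifact): boolean {
  switch (artifact.kind) {
    case "exit-code":
      return artifact.exitCode === 0;
    case "test-log":
      return artifact.failures === 0 && artifact.log.trim().length > 0;
  }
}

// Zone 2: the gate opens only when every attached artifact shows success,
// and stays closed when no artifact is attached at all.
function rule49Gate(payload: Payload): GateDecision {
  if (payload.artifacts.length === 0) {
    return { certified: false, reason: "no verifiable artifact attached" };
  }
  const failed = payload.artifacts.find((a) => !artifactPasses(a));
  if (failed) {
    return {
      certified: false,
      reason: `artifact of kind "${failed.kind}" does not show success`,
    };
  }
  return { certified: true, payload }; // Zone 3: forwarded to the human
}
```

The design choice worth noting is that an empty artifact list is rejected outright: in this sketch, the absence of evidence is treated the same way as failing evidence.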

Technical application: Architectural redlines and evidence

The true value of an AI solution lies in its ability to be invisible and integrated, executing tasks without needing constant human prompting (Bitnary, n.d.-c). Implementing Rule 49 requires the strict prohibition of “cognitive inhibitors” within this invisible workflow. In a G-Master environment, we establish architectural redlines: unsupported assertions such as “it works” or “it compiles” are classified as critical fail points.
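A redline check of this kind could be as simple as a phrase scan over the AI's report. The sketch below is an assumption about how such a check might look: `REDLINE_PHRASES` and `findRedlines` are hypothetical names, and the list contains only the two phrases quoted in the text.

```typescript
// Hypothetical redline list; the two entries are the examples quoted in the article.
const REDLINE_PHRASES: string[] = ["it works", "it compiles"];

// Returns every redline phrase found in the report text (case-insensitive).
// A non-empty result marks the report as a critical fail point.
function findRedlines(reportText: string): string[] {
  const normalized = reportText.toLowerCase();
  return REDLINE_PHRASES.filter((phrase) => normalized.includes(phrase));
}

const violations = findRedlines("Refactor done. It works and it compiles.");
console.log(violations.length > 0 ? "REJECTED" : "OK", violations);
```

A substring match is deliberately crude. A real deployment would likely need a broader phrase list and some tolerance for quoting, but the principle is the same: the claim itself is rejected, and only attached evidence can stand in for it.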

The necessity of these redlines is supported by research showing that LLMs cannot reliably self-correct their reasoning without external feedback, and that their answers can even degrade after a self-correction attempt (Huang et al., 2023). Therefore, the Automata, acting as the “arms” of intelligent automation (Bitnary, n.d.-b), must provide evidence:

- TypeScript / build completion: Execution of npx tsc --noEmit returning exit code 0.

- Web component refactor: Live DOM capture or HTTP 200 OK status.

- Script logic update: Literal output log from the environmental test execution.

- System state integration: Demonstrated terminal output of the live system state.

- etc…
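The evidence types listed above share one shape: run a command in the real environment and keep its literal output and exit code. The following is a minimal sketch for Node.js, assuming the standard `node:child_process` module; `CommandEvidence`, `collectEvidence`, and `isVerified` are hypothetical names introduced for illustration.

```typescript
import { spawnSync } from "node:child_process";

// Hypothetical evidence record: the literal result of one environmental check.
interface CommandEvidence {
  command: string;         // the command line that was executed
  exitCode: number | null; // null if the process could not be started or was killed
  stdout: string;          // literal output log
  stderr: string;
}

// Runs a command synchronously and records what actually happened.
function collectEvidence(command: string, args: string[]): CommandEvidence {
  const result = spawnSync(command, args, { encoding: "utf8" });
  return {
    command: [command, ...args].join(" "),
    exitCode: result.status,
    stdout: result.stdout ?? "",
    stderr: result.stderr ?? "",
  };
}

// Only a recorded exit code of 0 counts as success; a missing exit code does not.
function isVerified(evidence: CommandEvidence): boolean {
  return evidence.exitCode === 0;
}
```

For the TypeScript build check in the first bullet, a call might look like `collectEvidence("npx", ["tsc", "--noEmit"])`, run from the project root. On Windows, `npx` may need to be launched through a shell, which this sketch does not handle.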

Conclusion: Reclaiming the human capacity to think

The G-Master “Execute-Verify-Report” model is an economic optimizer. By mandating that AI validates its own work, we eliminate the staggering cost of human-led verification and align our operations with the financial reality of high-scale engineering.

Technology must provide the data; humans must provide the direction. As our mantra states: “In God we trust. All others must bring data.” Rule 49 ensures that environmental facts serve as the final arbiter of truth. It is time to stop trusting AI and start certifying it, freeing the human mind to do what it was meant to do: THINK (PENSAR) (Bitnary, n.d.-d), imagine, and innovate.

References

- Bitnary. (n.d.-a). Basic principles of an end-2-end operational AI (ML) solution, beyond the hype!, the transformative AI approach. Bitnary.info. https://bitnary.info/basic-principles-of-an-end-2-end-operational-ai-ml-solution-beyond-the-hype-the-transformative-ai-approach/

- Bitnary. (n.d.-b). The near future of the cognitive RPA, the arms of the “intelligent automation” for business digital transformation. Bitnary.info. https://bitnary.info/the-near-future-of-the-cognitive-rpa-the-arms-of-the-intelligent-automation-for-business-digital-transformation/

- Bitnary. (n.d.-c). The best AI solutions are invisible. Bitnary.info. https://bitnary.info/the-best-ai-solutions-are-invisible/

- Bitnary. (n.d.-d). An AI Robot doing your job? Bitnary.info. https://bitnary.info/ai/an-ai-robot-doing-your-job/

- Boehm, B. W. (1981). Software engineering economics. Prentice-Hall.

- Huang, J., Chen, X., Mishra, S., Zheng, H. S., Yu, A. W., Song, X., & Zhou, D. (2023). Large language models cannot self-correct reasoning yet. arXiv. https://doi.org/10.48550/arXiv.2310.01798